Every Abstraction Layer Erases an Apprenticeship

2026-05-10

Gen AI and the Socio-Technical Challenge of Expertise

There is a pattern running through the history of knowledge work: every time we abstract knowledge work by wrapping it in a tool, a platform, a formula, or a model, we gain something measurable and lose something that doesn't show up on any dashboard until it's gone.

Gen AI is the latest and deepest instance of this pattern. But I don't think we can understand what's at stake without first stepping back to look at the full sequence.

I should say that this isn't entirely new thinking for me. In 2016, I led a small research project on digital disruption in Australian professional services. One of the things that came back clearly from that work was that the biggest disruption would be felt not at the level of firms or business models, but by individuals, particularly those early in their careers. My conclusion was that automation and AI would increasingly augment practice in ways that changed the pathways into expertise. At the time that felt like a medium-term prediction. It is now immediate.

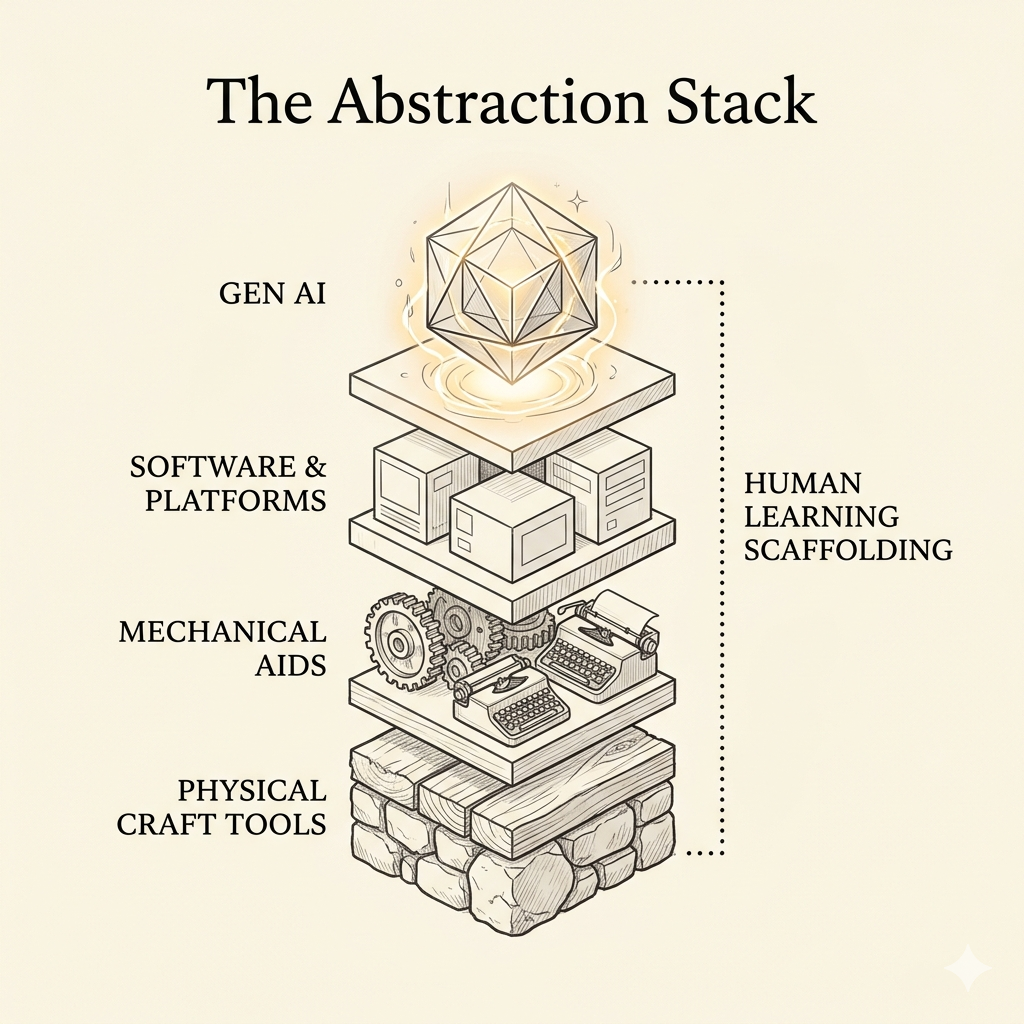

The abstraction stack

Consider the human "computers": a job category held predominantly by women at NASA, at census bureaus, and at actuarial firms. They didn't just do arithmetic. Through repetition, peer review, and the experience of being wrong and corrected, they developed numerical intuition. They caught errors not because they checked every figure, but because something felt off. That tacit knowledge lived in the people, not the process.

The spreadsheet didn't just automate the job. It eliminated the apprenticeship.

This is the pattern I want to trace. Each abstraction layer in knowledge work follows a similar logic:

- It removes friction from execution

- It raises the floor of what an average person can produce

- It obsoletes a category of cognitive labour that was previously the entry point to deeper expertise

- And it compresses the visible distance between novice and expert, making it harder to see that the distance still exists

The Abstraction Stack

From physical craft tools through mechanical aids, software, platforms and APIs, and now Gen AI, each layer abstracts more, delivers more, and quietly removes more of the social scaffolding that expertise was built on.

This is a socio-technical problem, not a technology problem

In the 1950s, Eric Trist and Ken Bamforth at the Tavistock Institute studied what happened when longwall coal mining was mechanised. The new technology was objectively more productive. It was also a disaster: absenteeism rose, accidents increased, and morale collapsed.

What Trist and Bamforth found was that the mechanisation had optimised the technical system while inadvertently destroying the social system. The small, self-managing work groups that had evolved around the old method carried tacit knowledge, mutual accountability, and informal safety practices. Nobody designed those groups. Nobody documented what they did. The new technology simply assumed they were irrelevant, and so they disappeared.

The foundational insight of Socio-Technical Systems (STS) theory, which grew out of that study, is that technical systems and social systems are always co-designed, whether you intend it or not. Optimise one without considering the other, and you degrade both.

This is precisely what happens at each abstraction layer in knowledge work. The productivity case for the new tool is compelling and usually correct. The social system analysis is rarely done at all.

The spreadsheet case is instructive here. Calculation was never the scarce resource; insight was. When the spreadsheet freed analysts from arithmetic, the best organisations redirected that capacity toward interpretation, judgment, and decision-making. The social system adapted. The technical and social were, in STS terms, jointly optimised.

But in many organisations, the spreadsheet also created a new problem: people who could manipulate a model but had no feel for whether its outputs made sense. The numerical intuition that used to develop through years of manual calculation was simply... skipped.

What Gen AI abstracts and what disappears with it

Gen AI takes this pattern further than any previous layer, because what it abstracts is not arithmetic or typing or system administration. It abstracts the cognitive middle. That's the drafting, summarising, first-pass analysis, and pattern-matching across documents that used to be where junior minds were trained.

In their 2016 HBR piece "Collaborative Overload," Rob Cross, Reb Rebele, and Adam Grant found that a small number of people carried a disproportionate share of an organisation's collaborative load and were being burned out by it. Their prescription was to redistribute that load across the network.

Today, we are seeing the 2026 evolution of this overload: "AI Brain Fry." A recent study by Boston Consulting Group (BCG) found that rather than redistributing the load, AI often adds a new layer of "surveillance labor." High performers now spend their day in a state of hyper-vigilance, overseeing multiple AI agents simultaneously. This validates what we saw in that 2016 research: when we abstract the "reps" of junior work, the cognitive burden doesn't disappear; it flows uphill, intensifying the load on the very experts already on the brink of collaborative overload.

Equally problematic: because Gen AI doesn't redistribute that load to less experienced professionals, it also removes the occasions for the collaboration that was doing the teaching.

Think about what used to happen around a piece of knowledge work:

- A junior analyst drafted a model. A senior reviewed it and said: that assumption doesn't hold in a downturn, here's why.

- A junior developer committed code. A senior reviewed it and said: this will break under load, let me show you.

- A junior consultant drafted a slide. A senior redlined it and said: you've described what happened, not what it means.

These interactions were not merely quality control. They were the primary mechanism by which expertise transmitted from one generation of practitioner to the next. The work was the occasion for the learning.

When Gen AI produces the first draft, that occasion disappears. The junior gets feedback on the AI's output, not their own thinking. The senior reviews something they didn't watch being built. The diagnostic conversation ("How did you get here?") loses its referent.

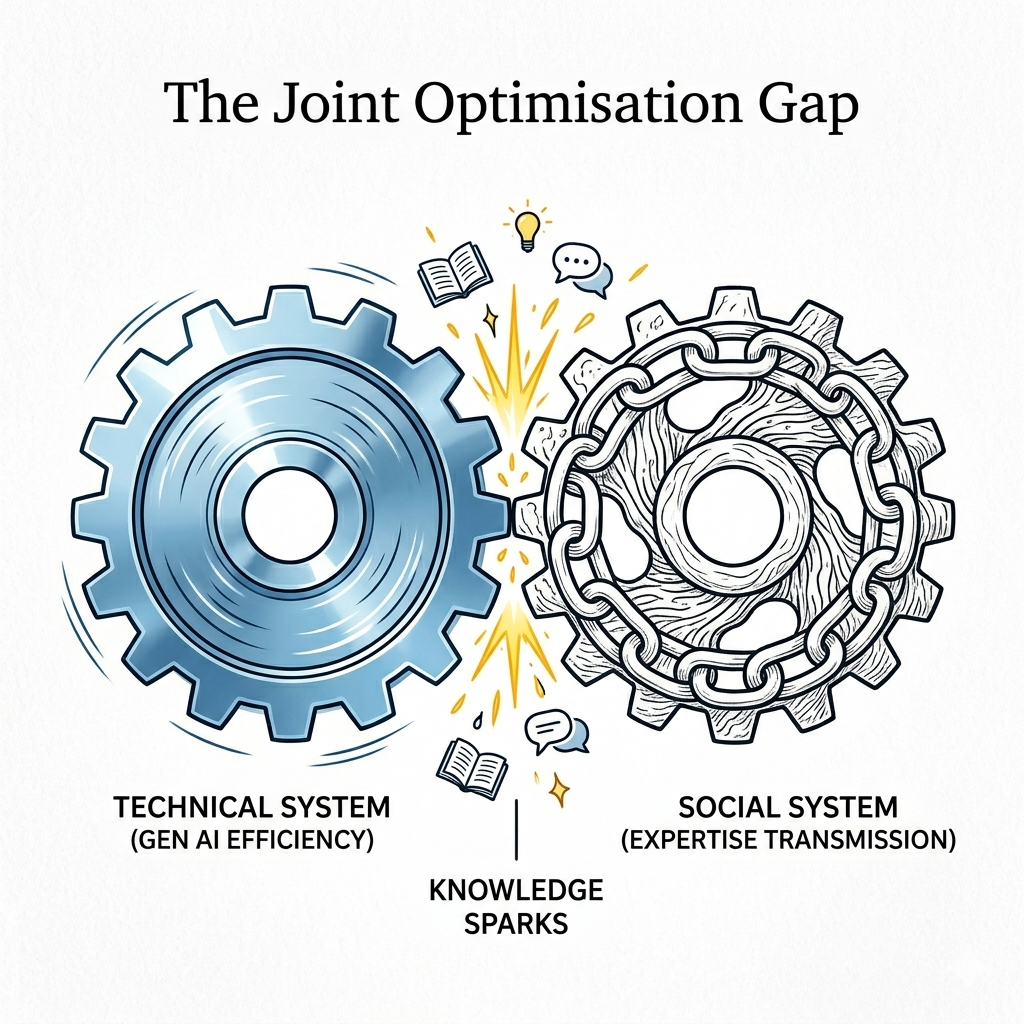

The Joint Optimisation Gap

This is the socio-technical failure hiding inside the efficiency gain. The technical system has been optimised, but the social system has been quietly redesigned by default. This includes the informal collaboration network, the expertise transmission mechanism, and the vital calibration that only happens through being visibly wrong.

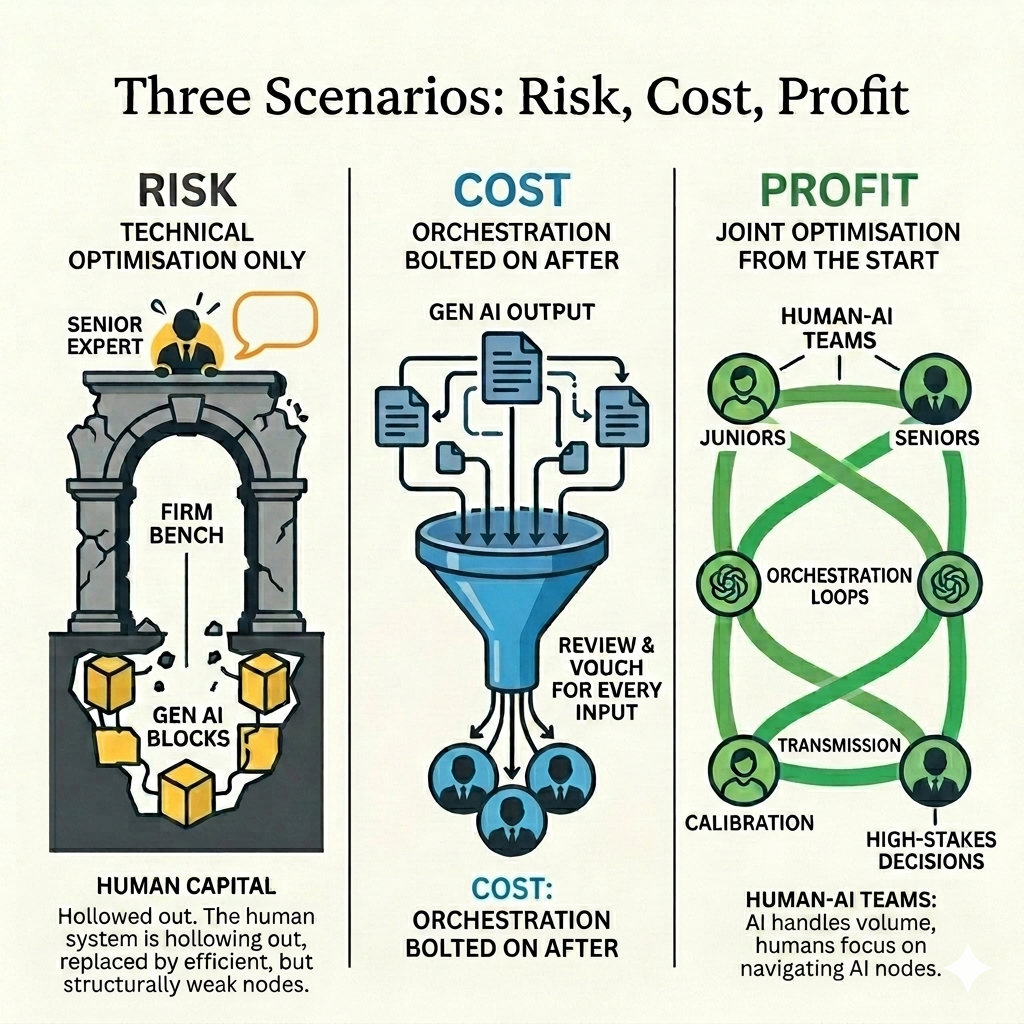

Three scenarios, not one outcome

None of this is inevitable. STS theory also gave us the concept of joint optimisation, the design principle that technical and social systems must be considered together, not sequentially. The question is whether organisations treat Gen AI deployment as a socio-technical design decision or simply as a procurement one.

In my experience, most treat it as the latter. That gives us three recognisable scenarios:

Risk: technical optimisation only. The tool is adopted because the efficiency case is compelling. Nobody maps what informal collaboration it disrupts or what expertise transmission it removes. The junior cohort develops faster output and shallower judgment. Errors that used to be caught in review now propagate, because the reviewer's pattern recognition was built on watching humans make mistakes, not on auditing AI outputs. The organisation looks productive. Its bench quietly hollows.

Richard Susskind has argued that there is a meaningful difference between training and exploitation in professional services, but most business models quietly prioritise the latter. Gen AI, deployed without socio-technical design, makes this structural bias worse. The junior is more useful immediately and less capable over time. The firm gets the exploitation and forgoes the training. The individual pays the price.

Cost: orchestration bolted on after. The organisation recognises the problem and attempts to solve it by adding human review. But the people qualified to review AI outputs at depth are the same overloaded senior people that Cross et al. identified a decade ago. They now carry a new tax: prompting, reviewing, and vouching for AI work on top of everything else. The efficiency gain is real but smaller than projected. The bottleneck has not moved; it has a new job title.

Profit: joint optimisation from the start. The organisation asks first: what is our binding constraint? If the constraint is volume, speed, or access, abstraction genuinely solves it, and the social system can adapt. If the constraint is judgment or expertise, the organisation deliberately preserves the collaborative occasions that build those things. Developmental work is routed to humans who need the reps. AI handles execution; humans own calibration, challenge, and transmission. The tool amplifies the right things.

Three Scenarios: Risk, Cost, Profit

The profit scenario is not simply "use AI thoughtfully." It requires a genuine diagnosis of what the binding constraint actually is and an honest accounting of what the social system was doing that the technical system cannot.

The orchestration question

STS theory also emphasised responsible autonomy, which is the capacity of a work group to self-regulate, adapt, and maintain its own standards without constant external intervention. Gen AI disrupts this in a specific way: it introduces a non-human actor into the work group that cannot self-regulate socially. It has no stake in the outcome. It does not learn from being wrong in this organisation, with these people, on this problem. It cannot carry the informal knowledge of what matters here.

This means someone has to carry all of that. They will have to orchestrate the boundary between what the AI does and what the human system does, and do so with enough expertise to know when that boundary is in the wrong place.

I've been calling this the human orchestration problem. It's not a new idea, and STS theorists would recognise it immediately as a boundary management question. But it's newly urgent, because the boundary is now between human and non-human cognition, not just between departments or roles.

The organisations that get this right won't just avoid the hollowing-out problem. They'll develop something genuinely scarce: the institutional knowledge of how to jointly optimise human and AI systems in their specific context. That's not a feature. It's not a setting. It's a capability, and it's built through deliberate practice, visible mistakes, and the collaboration that happens around the work.

Which brings me back to something I wrote in 2016 at the end of that professional services research: the importance of building the capacity to engage purposefully with digital tools rather than simply being subject to them. I called it digital resilience and was thinking then about social media and automation. The phrase needs updating for this moment.

Digital resilience in the Gen AI era is not about using the tools well. It is about understanding what the tools are doing to the social system around the work, and making deliberate choices about what to protect. That requires enough self-awareness to ask: what am I actually learning here, and what am I outsourcing? It requires organisations to ask: what does our AI deployment do to the pathway between junior and senior? And it requires every profession to take Susskind's distinction between training and exploitation seriously, and to decide which one it is actually investing in.

The pattern keeps repeating because the productivity gains are always real and always immediate. The losses are always slow, always invisible, and always social.

The question for Gen AI is whether we notice the pattern this time before the expertise we needed to notice it has already gone.

References

- Bedard, J., Kellerman, G., & Wigler, B. (2026). When Using AI Leads to “Brain Fry”. Harvard Business Review.

- Cross, R., Rebele, R., & Grant, A. (2016). Collaborative Overload. Harvard Business Review.

- Dellow, J. (2020). Let’s talk about collaboration and work management.

- Dellow, J. (2020). Solving the Collaborative Work Management Puzzle.

- Dellow, J. (2016). Digital Disruption in Professional Services - Final update.

- Susskind, R., & Susskind, D. (2015). The Future of the Professions. Oxford University Press.

- Trist, E. L., & Bamforth, K. W. (1951). Some Social and Psychological Consequences of the Longwall Method of Coal-Getting. Human Relations.

Note: I developed the ideas and diagrams in this post in conversation with Claude and Gemini. While the underlying thinking and socio-technical analysis are mine, the dialogue with these Large Language Models helped me refine the structure, synthesise my previous research, and visualise the core concepts.