The new mobile user experience canvas

2016-02-19

In 2012 I wrote a well-received guide to designing mobile apps for business; the diagrams from the report were published online under a Creative Commons license.

While I recognised the tricorder-like abilities of smartphones, the emphasis at that time was still on asking people to consider how users would interact with the device's screen in the context of where they were and what they were doing.

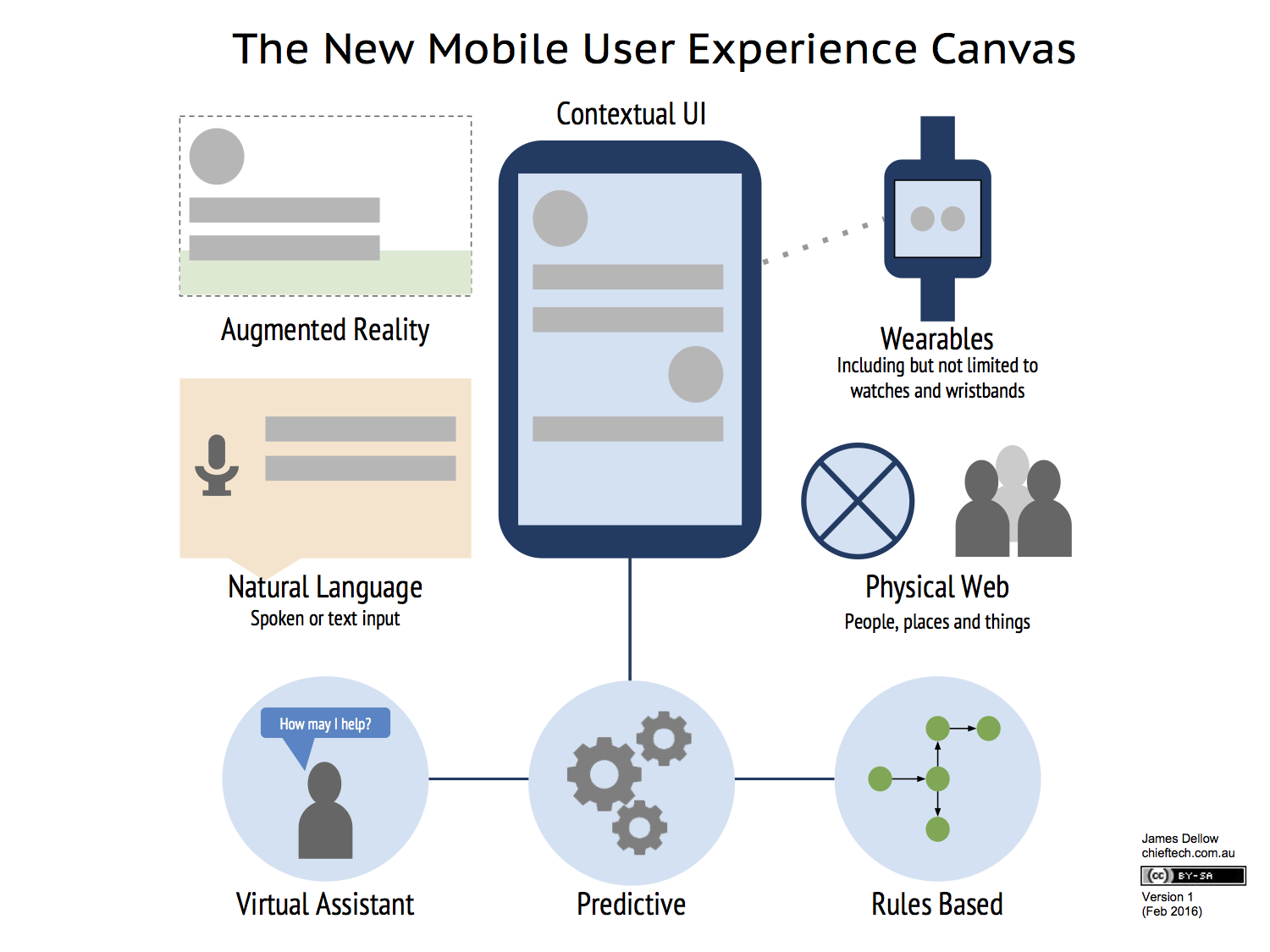

In the relatively short time since I wrote that guide, a number of technologies have entered mainstream use, including wearables. As we look ahead to the design considerations for mobile computing in the near future, I think it is time to start shifting our focus away from the screen itself and towards other aspects of interaction.

The screen is not going away, but in many cases the development of unique or sophisticated app interfaces will become less important to the overall usability and user experience. Instead, designers will spend more time considering where, when and how to make relevant information and actions available to the user through the multitude of primary and auxiliary interfaces. In some instances this interaction is spreading into the physical environment, as with mobile payments or unlocking a door.

This new diagram provides an overview of that new mobile user experience canvas:

(Here is a PDF version.)

Some of these interactions could already be considered commonplace. But as this contextual functionality becomes richer over time, it will in turn change people's interaction preferences.

One of the more revolutionary interaction possibilities now emerging is MIT's Reality Editor. The Reality Editor uses a smartphone to combine the Internet of Things with Augmented Reality, so people can connect and manipulate the functionality of compatible physical objects simply by pointing their camera at them.

REALITY EDITOR from Fluid Interfaces on Vimeo.

These new types of interaction will require us to think differently about how we design. Tanya Kraljic, a UX designer, recently described in an O'Reilly Design Podcast how designing for voice interaction differs from designing a graphical user interface:

> There are a lot of principles of interaction design that apply to voice as well, but designing for voice or speech is really all about helping users; it's all about filling in the blanks for users, in a sense. When you design for, say, the GUI for a mobile application, you can be very deterministic. You decide what functionality you're going to enable. That functionality is easy to communicate to users, in a sense, because you put buttons and labels on the screen, and if there isn't a path for something or a button for something, then it's not available - or you might put something there but have it disabled, right? A user goes in there and it's pretty clear what they can and can't do. But, when you design for natural language, you're flipping that script.

Similarly, designers are thinking about the user experience of bot personalities and about design patterns for systems that use predictive analytics.

There is some overlap with the desktop or laptop user experience. Cortana is already available on the Windows 10 desktop as well as on iPhone and Android. But the scope of interaction on a large form-factor device like a laptop will always be more constrained than on a device we carry with us. A desktop or laptop is also just another thing in the physical web to interact with; Google Smart Lock for Chromebook is a simple illustration of that relationship.

Virtual reality headsets, on the other hand, may even overtake the smartphone as the primary interaction hub, assuming we can get the form factor right and deal with other issues such as "VR sickness". For now, though, lightweight virtual reality, where the headset uses a smartphone as its display, means our mobile phones will remain the keystone technology.

Either way, this new canvas of mobile interaction gives us brand new design problems to solve and experiment with.